What’s going on:

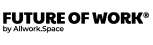

Europe is leading the way in regulating artificial intelligence (AI). A committee of lawmakers in the European Parliament recently approved a regulation known as the European AI Act, according to CNBC. The legislation, now closer to becoming law, is meant to provide a regulatory framework for AI systems, which have been evolving rapidly as companies like Microsoft-backed OpenAI and Google develop advanced AI technologies such as ChatGPT and Bard, respectively.

Why it matters:

The European AI Act would establish rules both for companies developing AI and for organizations using it.

CNBC reports that the AI Act sorts AI applications into four levels of risk: minimal or no risk, limited risk, high risk, and unacceptable risk. An application categorized as unacceptable risk is banned by default.

The proposed European legislation is intended to keep developers of AI systems within legal guardrails. Experts say that its goal is to protect jobs currently held by skilled workers. It also addresses key concerns about potential biases in AI systems and the use of AI in sensitive areas like law enforcement, border management, and education.

How it’ll impact the future:

More and more companies worldwide are using artificial intelligence systems, and concerns are growing that skilled workers may be displaced as these systems become more advanced.

By regulating AI development and deployment, the European AI Act aims to strike a balance between harnessing the benefits of AI technology and mitigating its potential risks to society. Countries outside the European Union may be influenced by this landmark legislation when they develop their own AI laws and regulations.